AI Waves #3: A Google algorithm crashed memory stocks. An 1865 paradox explains why they’ll recover.

You will never own enough compute.

April 1, 2026 | Nazaré Ventures

Hi friend of Nazaré,

The news feels so strange at times that it’s hard to know whether it’s an April Fools’ joke or not.

On Tuesday, Google Research published TurboQuant, a compression algorithm that shrinks LLM working memory sixfold and speeds up attention computation eightfold on H100s. No retraining required. No measurable accuracy loss.

SK Hynix fell 6%. Samsung dropped 5%. Micron and Sandisk followed. The logic: if software compresses AI memory sixfold, fewer chips ship.

This logic has been wrong for 161 years.

Jevons’ Paradox

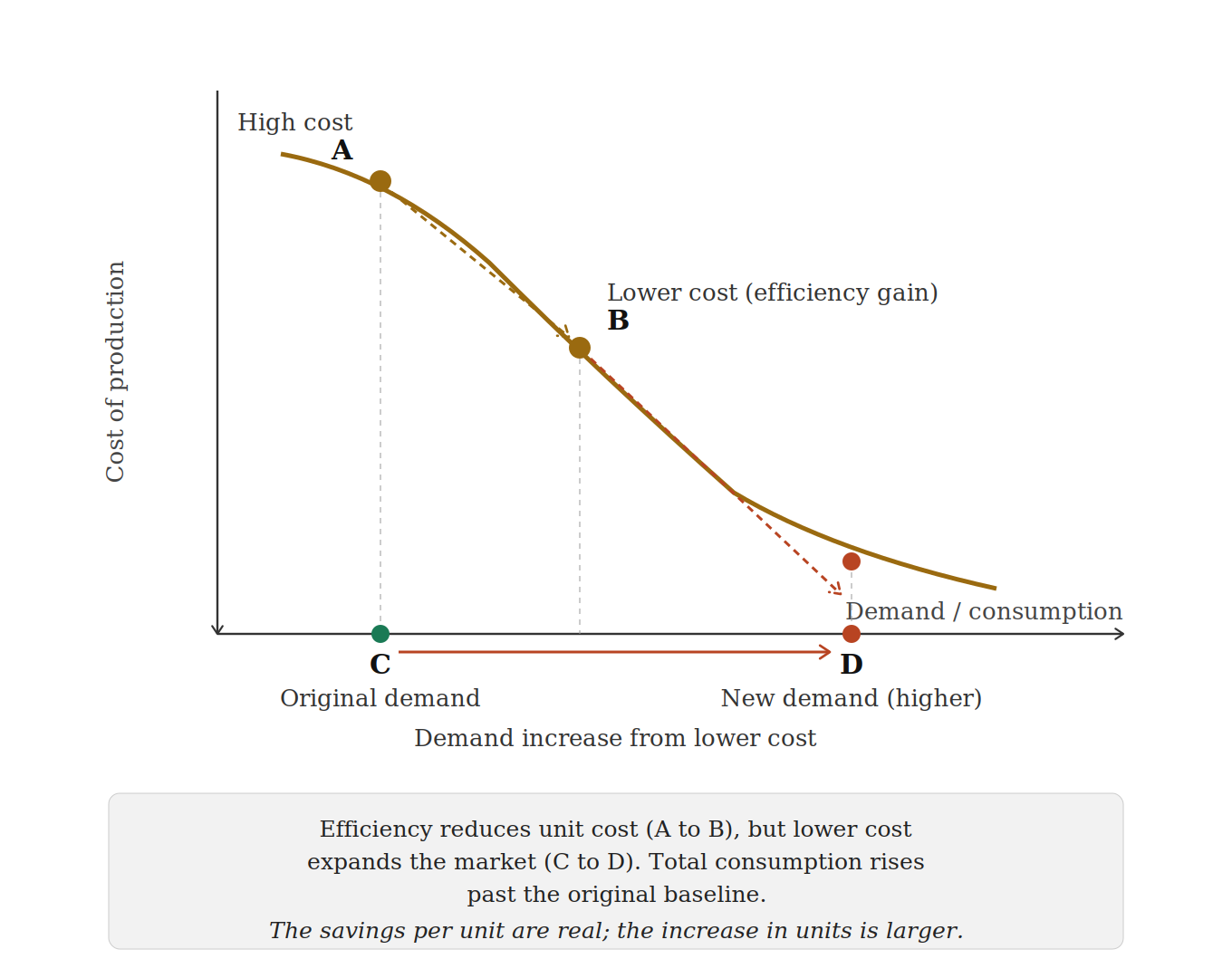

In 1865, William Stanley Jevons published The Coal Question. He observed that James Watt’s more efficient steam engine had not reduced Britain’s coal consumption. It had increased it. Efficiency made steam power viable for new applications. Factories multiplied. Coal demand quadrupled.

The pattern has repeated through every computing cycle. Moore’s Law did not reduce chip purchases. It created the personal computer, the smartphone, and the cloud. H.264 did not reduce bandwidth. It created Netflix. DeepSeek’s efficient training did not reduce GPU demand. It opened frontier AI to thousands of teams that could not afford it before. Satya Nadella wrote “Jevons paradox strikes again” after DeepSeek. He was right.

Lower cost per unit expands the addressable market. The expanded market drives total consumption past the original baseline.
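One way to make that mechanism concrete is a constant-elasticity demand model. This is a minimal sketch under stated assumptions, not a forecast: the function name `resource_use_ratio` and the elasticity values are illustrative and are not estimates for the memory market. It shows that total resource use rises after an efficiency gain only when demand elasticity is above 1.

```python
def resource_use_ratio(efficiency_gain: float, elasticity: float) -> float:
    """Ratio of new to old resource consumption after an efficiency gain.

    Assumes constant-elasticity demand: when the cost per unit of service
    falls by `efficiency_gain`, demand for the service grows by
    efficiency_gain ** elasticity. Each unit of service now needs only
    1 / efficiency_gain of the underlying resource.
    """
    service_demand_growth = efficiency_gain ** elasticity
    return service_demand_growth / efficiency_gain


if __name__ == "__main__":
    # Illustrative elasticities only; a sixfold gain mirrors the headline figure.
    for elasticity in (0.5, 1.0, 1.5):
        ratio = resource_use_ratio(6.0, elasticity)
        print(f"elasticity={elasticity}: resource use changes by x{ratio:.2f}")
```

With elasticity below 1 the sell-off logic holds and consumption falls. Above 1, consumption ends up higher than before the efficiency gain, which is the Jevons case.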

TurboQuant will follow the same path. A model that required 48GB of VRAM for a 100,000-token conversation now fits in 8GB. That is frontier AI running on a MacBook, a phone, an edge device in a factory or a hospital. Every device that could not run a serious model yesterday can run one tomorrow. That is not demand reduction. It is demand expansion at a different order of magnitude.

What TurboQuant Actually Does

If you watched Silicon Valley, think of it as Pied Piper but real. TurboQuant compresses the memory that AI models use to remember earlier parts of a conversation. It does not change the model itself or make it smaller. It just makes the notepad the model writes on take up far less space.

The result: the same model, on the same hardware, can now hold four to six times more context. A model that could read a chapter can now read a book. A model that could review a function can now review a codebase. Community implementations appeared on GitHub before the market opened the following morning. Cloudflare’s CEO called it “Google’s DeepSeek moment.”
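The memory arithmetic can be sketched in a few lines, assuming the "notepad" is a transformer key-value cache whose size grows linearly with context length. The model shape below (`HYPOTHETICAL_MODEL`) is invented for illustration and is not any specific model, and the sixfold figure is taken from the headline claim rather than derived from the algorithm itself.

```python
import math

# Hypothetical model shape, for illustration only.
HYPOTHETICAL_MODEL = {"layers": 64, "kv_heads": 8, "head_dim": 128}


def kv_cache_gib(tokens, layers, kv_heads, head_dim, bits_per_value):
    """Approximate key-value cache size in GiB for a given context length."""
    # Keys and values are both cached, hence the factor of 2.
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value / 8 / 2**30


def max_tokens(budget_gib, layers, kv_heads, head_dim, bits_per_value):
    """Longest context whose cache fits inside a fixed memory budget."""
    bytes_per_token = 2 * layers * kv_heads * head_dim * bits_per_value / 8
    return int(budget_gib * 2**30 // bytes_per_token)


if __name__ == "__main__":
    baseline_bits = 16                    # assumed 16-bit cache entries
    compressed_bits = baseline_bits / 6   # sixfold compression, per the claim

    before = kv_cache_gib(100_000, bits_per_value=baseline_bits, **HYPOTHETICAL_MODEL)
    after = kv_cache_gib(100_000, bits_per_value=compressed_bits, **HYPOTHETICAL_MODEL)
    print(f"100k-token cache: {before:.1f} GiB -> {after:.1f} GiB")

    short = max_tokens(8, bits_per_value=baseline_bits, **HYPOTHETICAL_MODEL)
    long = max_tokens(8, bits_per_value=compressed_bits, **HYPOTHETICAL_MODEL)
    print(f"8 GiB budget: {short:,} tokens -> {long:,} tokens")
```

Because the cache scales linearly with both tokens and bits per value, the same compression ratio can be spent either on a smaller footprint or on a longer context at a fixed budget.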

One nuance that matters: TurboQuant compresses inference memory, not training memory. The chips needed to train new models are untouched. HBM demand for training clusters has not changed. TrendForce has revised its DRAM price forecasts upward twice, now projecting contract prices up 90-95% quarter on quarter.

The Personal Angle

I have been betting on algorithms for 30 years.

My PhD at Cambridge focused on neural network training efficiency, specifically scaling speech recognition models using Mixtures of Experts, the same architecture DeepSeek made famous three decades later. At NASA Ames, I built models for space debris tracking and Mars rover data analysis. The constraint was always the same: too little compute, so you had to be clever with the mathematics.

Every algorithmic breakthrough since has produced the same debate. “This will reduce the need for hardware.” And every time, the opposite happened.

This is the core of what we call the MACHA thesis. Make AI Cheap Again. Algorithmic efficiency does not shrink the market. It detonates it.

The bet against memory chips after an efficiency breakthrough is the same bet made, and lost, since 1865. Watt built a better engine. Britain burned more coal. Google built a better compressor. The world will run more AI.

We keep betting on the algorithms.

A Note on Google

The popular AI narrative focuses on OpenAI product launches and Anthropic safety research. This is odd, given that Google invented the transformer, built BERT, T5, PaLM, and Gemini, and now TurboQuant. The team, led by Amir Zandieh and Vahab Mirrokni (Google Fellow) with collaborators at DeepMind, NYU, and KAIST, produced work that approaches the information-theoretic limit for vector quantization. The deepest technical moats are built in research labs, not product demos.

This Week in AI

OpenAI shut down Sora. The video generation tool peaked at roughly one million users after launch, then collapsed to fewer than 500,000 while burning approximately $1 million per day. The $1 billion Disney partnership died with it. OpenAI redirected the compute toward robotics. Without efficiency breakthroughs, compute-heavy AI products are uneconomic.

Anthropic’s next model leaked. A misconfigured CMS left nearly 3,000 unpublished assets publicly accessible, including draft posts describing a model called Claude Mythos (internal codename Capybara). Anthropic confirmed it: “the most capable we’ve built to date,” a step change in reasoning, coding, and cybersecurity. The draft warned of “unprecedented cybersecurity risks” and described the model as “currently far ahead of any other AI model in cyber capabilities.” Anthropic is privately briefing government officials. The rollout will be deliberately slow. The irony of a safety-focused company leaking its own model via a CMS error is noted.

Mistral secured $830 million in debt financing for a data centre near Paris, powered by 13,800 NVIDIA GB300 GPUs. Revenue grew from $20 million to $400 million ARR in one year. Target: $1 billion ARR by year end, 200 megawatts of European compute capacity by end of 2027. This is the Red Hat thesis from Root Access playing out.

MCP crossed 97 million monthly downloads. Anthropic’s Model Context Protocol reached 97 million monthly SDK downloads in March. Kubernetes took four years to reach comparable deployment density. Every major AI provider now ships MCP-compatible tooling as default. The protocol layer is settled. Competition has moved to orchestration and security above it.

OpenAI raised $110 billion at a $730 billion valuation from Amazon, NVIDIA, and SoftBank.

Block cut 40% of its workforce. Jack Dorsey cited AI tools enabling smaller, more efficient teams. Jevons’ Paradox applied to labour: efficiency reduces demand for humans, not for AI. Every company that downsizes because of AI tooling becomes a larger buyer of AI infrastructure.

Meta and AMD formalised a $60 billion AI chip partnership tied to a 6-gigawatt GPU rollout. While markets panic about TurboQuant reducing chip demand, actual buyers are signing the largest hardware deals in history.

Portfolio

Prime Intellect released prime-rl v0.5.0, their largest update to date, with over 200 commits from 22 contributors. The headline feature is disaggregated prefill-decode inference, separating prefill and decode phases across dedicated GPU pools. This is the architecture that makes agentic RL training work at scale. New model support includes GLM-5, Qwen3.5 MoE, Nemotron-H, MiniMax M2.5, and GPT-OSS. On April 9, Prime Intellect is co-hosting a systems hackathon in Paris with GPU MODE and PyTorch Foundation, immediately following PyTorch Conference Europe. Two tracks: distributed training and inference optimisation. Access to B300 clusters from Verda and H200s from Sesterce.

Vast.ai was featured on the ProductLed podcast this week. The episode covers how the company scaled from a niche GPU marketplace to powering inference workloads for teams worldwide, with over 20,000 GPUs on the platform. The key insight: as inference gets cheaper, usage increases rather than levelling off. Lower cost makes new use cases viable, and new use cases bring in more users. It is Jevons’ Paradox applied directly to compute, and Vast.ai sits at the centre of that flywheel.