Models Aren’t Moats

General intelligence creates the value. Specialized intelligence might capture it.

Today we discuss the “moat” as it relates to AI. Will frontier labs eat everything? If not, what’s the thing that keeps an AI-native business afloat, the labs at bay, and replication (aka “Sherlocking”) difficult enough to discourage it.

Let’s first agree that value will accrue somewhere in the age of AI. Maybe the frontier labs do end up building AGI or ASI, in which case the entire question of moats becomes moot, and so does most other planning. That’s a real possibility, not a negligible one. But it’s the one branch where nothing anyone builds matters, so this piece is about the other branch, the far wider one, where AI is transformative but not total.

What’s far more likely is that specialization, not general-purpose intelligence, is where the value accrues.

Failing Precedent & The Veil of General Intelligence

Part of what’s so difficult about predicting the moat for AI is that historical precedents seem ill-equipped to model value accrual for AI-native enterprises. The frontier labs seem to possess many of the classic defensible “moats” like network effects, switching costs, scale economies, brand, and IP, all of which point to their continued future dominance.

If “frontier labs will eat everything,” why bet against them? Why fund anything with even a remote chance of getting “sherlocked?” This reminds me of how hard it was to fund anything consumer-facing in the 2010’s for fear of getting put out of business by the social media behemoths. Consequently, we’re witnessing a continued boom in everything frontier-lab related. Compute, memory, raw materials, shares of the frontier labs themselves, they’re all ripping higher together as they punish incumbents perceived to be threatened or vulnerable.

Dave Eggers saw the endpoint of this a decade ago. In The Circle, the dominant platform has so completely captured its ecosystem that startups are no longer built to stand on their own, they are founded to be acquired by The Circle, because there is no longer any other outcome available to them. That is the world the “frontier labs will eat everything” thesis quietly assumes: a market where the only rational exit is absorption, and where independence is not even attempted. It is worth asking whether that assumption holds.

The problem is that the labs’ perceived “general” dominance is almost certainly only temporary.

Although the labs would have us believe that all-powerful general-purpose intelligence is the ultimate goal, recent developments betray their fickle conviction. Omnipotent AGI/ASI is still largely a marketing and fundraising tactic.

What is machine god worth? Enough to justify any valuation you want.

You can read this as part of the reason they’re rushing to go public: we’re still early enough that the general-intelligence value-accrual thesis can’t yet be invalidated.

And that’s the ultimate point. What’s clear is we’re extremely early to this new technological revolution, and nobody has had time to adjust. Machines accelerate faster than humans, who have cognitive, cultural, and institutional half-lives. We metabolize change more slowly, requiring reflection, context, sometimes even over-reaction (see: the markets), and recovery.

The notion that “frontier labs will eat everything” is based on the astonishing power, competence, and novelty of general-purpose artificial intelligence. What the frontier labs have done is nothing short of extraordinary, and they deserve all the hype (if not necessarily the valuation).

But that does not mean all future value will accrue to general-purpose intelligence providers. All it means is that using AI is now the baseline. We need to reinvent almost everything to incorporate AI in its most native form, but how it gets used, and who benefits from it, remains to be seen.

Specialization: “Harnesses” & the Application Layer

In February 2025, Benedict Evans wrote that the frontier labs have

“no moat or defensibility except access to capital, they don’t have product-market fit outside of coding and marketing, and they don’t really have products either, just text boxes, and APIs for other people to build products.”

He was still making the same argument on stage at Fortune’s Brainstorm AI in London that May.

In September 2025, Andrew Chen (GP @ a16z) wrote:

“The next few years will be about the folks who build the business logic that sits on top of these models. They won’t do AI research or train their own foundation models. Instead, they’ll be model-agnostic, creating a compelling UI that sits on top.”

Both analyses point to models themselves becoming commodities, meaning value would accrue to something other than the model-providers themselves. “Commoditization” is the classic rebuttal to foundational products or platforms accruing any kind of meaningful value: conventional wisdom is that value accrues at the application layer on top of them.

The most useful form of AI today is general intelligence (read: one of the frontier models) optimized for a particular context, user, workflow, or subject matter. That kind of optimization has historically lived in “the prompt,” which has morphed into “the skills.” Prompt engineering involved designing the right query to coax chatbots into returning useful information. Skills are like automated prompts that live in markdown files agents can read to perform certain actions without being prompted.

Either way, LLMs are incredible context machines, meaning that with sufficiently specialized context, data, and objectives they can be extraordinarily effective, especially as increasingly-powerful agents continue to come online. “Applied AI” is in many cases properly incorporating that context in as effective and efficient a way as possible.

At the moment, applications are thus increasingly taking the form of what’s being called a “harness.”

A harness is the intermediate layer between user and foundation model. It takes general model capability and turns it into a specific, usable framework for a particular context, user, workflow, or industry.

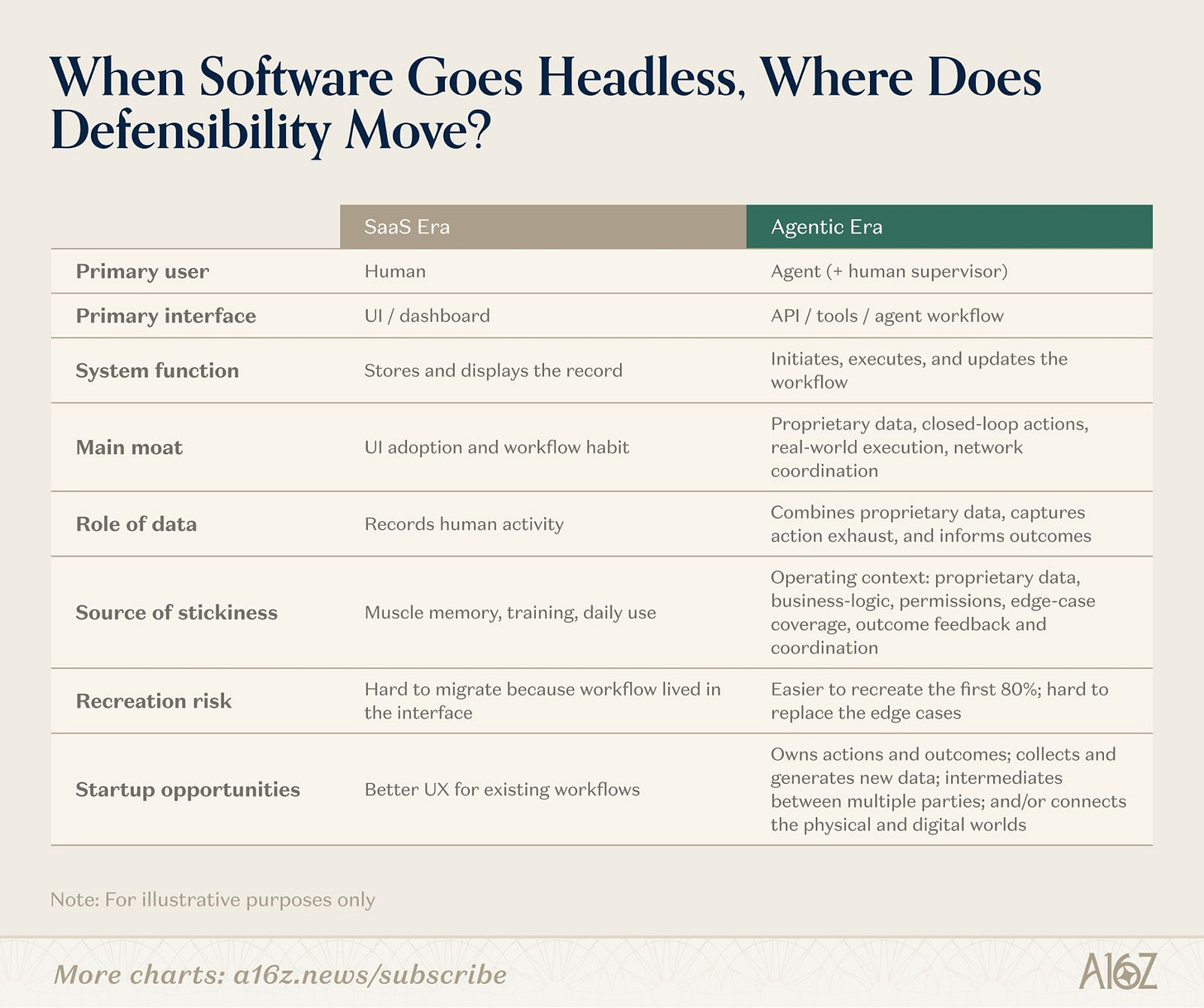

a16z “When Software Goes Headless”

Whatever the industry settles on calling this “layer” (a harness, a wrapper, an agent, a platform, etc.) the thing being described is the architecture that focuses the underlying model, prioritizes the user it’s built for, and optimizes for the objective it’s pursuing at the same time.

The moat is that specialized architecture. Value will accrue to the companies who build the best versions of specialized intelligence.

Legible Labs

If you look closely, the frontier labs themselves have been telling us this. When Karpathy and the rest of the industry say that “agentic coding” shifted around November 2024, it’s no coincidence that it was effectively the first successful implementation of a model+harness trained together. The models were exceptional, but so was the specialty software built to use them.

Cursor deserves some credit here, because they pioneered the AI programming paradigm. The models were great, but Cursor was better because of their harness: what they built around the models.

It would be dishonest to cite Cursor as pure validation, though, because Cursor is also the clearest warning in the space. They grew into abysmal unit economics, paying retail prices for inference while marking up a thin spread, and watched the labs they depend on enter their exact vertical with Claude Code and Codex. Their response is the instructive part. Rather than accept life as a permanent reseller, Cursor’s parent company Anysphere built its own coding model, Composer, shipping three generations between October 2025 and March 2026, each cheaper to run than the frontier models it had been routing to. Composer is not a frontier model trained from scratch, it is a specialized model built on an undisclosed base and tuned hard for the work an IDE actually does. But building it well enough to matter required training compute at a scale Cursor did not have. In April 2026, Anysphere granted SpaceX an option to acquire the company for $60 billion later in the year, or to pay $10 billion for the partnership alone, explicitly so it could train Composer on xAI’s Colossus infrastructure. The best independent harness in the most valuable vertical moved down the stack to escape lab dependence, hit a compute wall doing it, and ended up holding an offer to be absorbed into a vertically integrated stack instead.

That arc reads like a refutation of the entire harness thesis. It isn’t, but it is worth being precise about why. Coding is the one vertical where the lab reaches the buyer through the identical motion it uses to sell model access, where the lab has the deepest in-house expertise, and where there is no liability, no regulatory wall, and no proprietary customer data the lab can’t see. Cursor was the most exposed harness in the most exposed vertical. It built where every structural advantage ran the lab’s way, and even moving at a velocity few companies could match, the gravity still pulled it in. The lesson is not that every harness gets sherlocked or swallowed. It is the opposite: Cursor is the boundary case that defines the rule. The harnesses that stay independent are the ones built where the lab structurally can’t or won’t follow, which is the rest of this piece.

In fact, Anthropic’s entire push into the “enterprise” is a tacit acceptance of specialization: an admission that although the quality of the models matters, it is not enough to succeed. After building Claude Code, for example, they continued with Claude Cowork, both harnesses designed in unique ways to optimize the underlying Claude models for different types of knowledge work output.

Most recently, Anthropic launched a $1.5 billion joint venture with Blackstone, Hellman & Friedman, and Goldman Sachs, with General Atlantic and Apollo among the other participants, to act as a consulting arm helping mid-sized businesses, including the PE firms’ portfolio companies, integrate Claude into their operations.

Almost instantly (a week later) OpenAI launched its own Deployment Company with $4 billion in initial funding from a 19-firm consortium led by TPG, with co-lead capital from firms like Bain Capital and Brookfield, and, tellingly, McKinsey and other major consultancies joining as services partners. It brought in Tomoro’s roughly 150 forward-deployed engineers on day one.

Both labs use language in their release related to specialization. Ever the sharp observer, Ethan Mollick immediately said the quiet part out loud.

The harness builders themselves see it the same way. When Anthropic shipped its legal plugins, Max Junestrand, whose company Legora builds a vertical legal platform on Anthropic’s models, responded publicly that better foundation models improve what his product can deliver, and that he welcomes the progress.

His framing: the model is necessary but insufficient. Enterprise legal work, he wrote, requires contextual understanding, auditability, integrations, ethical walls, confidentiality, and workflows built for the realities of legal practice, plus the enterprise-grade security and governance infrastructure that bar rules and, in many jurisdictions, the law itself require. Legora also employs a team of legal engineers, former practicing lawyers who deploy the system on-site, redesign workflows, and run change management. That is the same forward-deployed model the labs just paid billions to assemble, already running inside the harness.

Junestrand has every incentive to frame an Anthropic release as a tailwind rather than a threat, of course, no founder mid-raise publishes the opposite. But discount the optimism and the structural logic still stands on its own: the things he lists are real obligations, the legal engineers are a real cost center, and a model improving underneath all of it genuinely does raise the ceiling on what the harness can deliver. The incentive explains why he said what he said, but it doesn’t explain away what he described.

Anatomy of a Harness

Mollick is articulating the labs’ revealed preference: they don’t believe AGI/ASI is real enough yet to forgo investing in ways to build and distribute specialized intelligence. Value indeed lies between the model and the customer, and the labs themselves are investing significant amounts of precious capital to be present in that space.

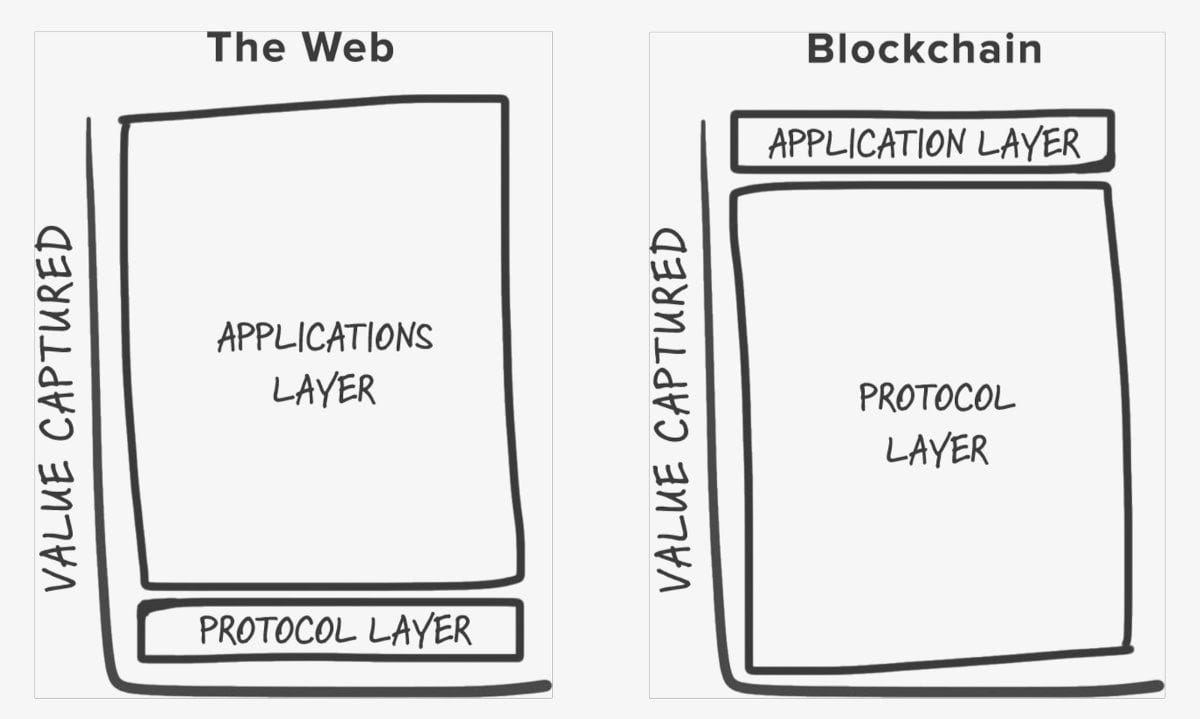

Another way to look at AI is this: is it the internet all over again, or is it “fat protocols” like crypto?

The internet era ran thin protocols and fat applications, with value captured upstack at Google, Meta, and Amazon. Joel Monegro argued in 2016 that crypto looked like the opposite: fat protocols, thin applications, value at the base (BTC, ETH, SOL, the primary “Layer-1s”).

Whether the labs get there first or not, it’s worth considering what “specialized intelligence delivered through a harness” actually consists of, and what might make it durable. Four qualities show up across the harnesses that have so far held their ground.

Encoded expertise. The expertise lives in the software around the model. Decision trees, routing, sequencing, tool selection, guardrails, conflict checks, citation logic, all built into the harness as deterministic infrastructure the model runs against. The VILA-Lab reverse-engineering of Claude Code v2.1.88 found 98.4% deterministic infrastructure and 1.6% AI decision logic across roughly 512,000 lines of TypeScript. Even Anthropic’s own flagship harness runs mostly on engineering.

This also includes model-specific craft: tool naming, prompt structure, capability boundaries, and the tacit conventions the lab encodes during training. Cursor’s engineering blog documents this kind of work continually. A harness tuned to one model is not portable to another without rework.

Proprietary data. The data that matters in any vertical belongs to the customer, not the harness and not the lab. The harness’s advantage is being the layer that makes that data usable: routing against it natively, turning a static asset into a live product surface the agent works through, rather than something reloaded into every session. Done well, this is not a feature the customer rents. It is an integration that deepens the longer it runs, and it is structurally hard for the closed labs to match, because their business routes every customer interaction back through their own infrastructure by default.

For what it’s worth, this is a good example of an opportunity space for a harness. Creating AI-native, airtight datarooms, sandboxes, and places for agents to collaborate safely on sensitive data will eventually be critical.

Workflow and interface specialization. The model needs to meet the practitioner where they work. This is where design, taste, subject-matter expertise, and even intuition can distinguish one product from another.

The labs default to chat as the lowest-cost interface. Chat covers everything decently, which is fine for a general-purpose model but insufficient for highly-effective specialized intelligence. This is why Claude Code and Claude Cowork look (and feel) different. AI-native, tailor-made ways of specializing intelligence will be created from day 1 with the end-user in mind (be they humans or agents), incorporating value-additive design choices throughout the product.

Accountable counterparty. Regulated buyers need someone whose name goes on the work product when it turns out to be wrong. Lawyers using hallucinated case law in the courtroom have no recourse with the model provider.

Harvey (legal) and OpenEvidence (medical) are leaders building early versions of this kind of solution.

In yet another example of Anthropic moving into this space, on May 12 they announced a dozen legal plugins for Cowork covering vendor-agreement review, bar-exam study, and integrations with DocuSign, Thomson Reuters, and Harvey itself.

The four qualities become a business when they get productized. A robust harness can be built once and configured for many customers (each of whom layers their own data, workflows, integrations, and staff training on top) or it can be tailored specifically for individual customers on an as-needed basis.

That’s the entire point behind the frontier labs’ push into different verticals, and even the labs themselves admit as much.

Applied AI engineers from Anthropic will work alongside the firm’s engineering team to identify where Claude can have the most impact, build custom solutions, and support customers over the long term.

FDEs will work closely with business leaders, operators, and frontline teams to identify where AI can make the biggest impact, redesign organizational infrastructure and critical workflows around it, and turn those gains into durable systems.

— OpenAI

Anthropic and rival OpenAI have spent much of the past year developing artificial intelligence tools to streamline a wider range of professional tasks, from financial services to health care, with the goal of courting more business customers and justifying their lofty valuations.

As memory, verification, and coordination continue to improve, so too will the best versions of these intermediate layers, thereby continuing to command a price premium. The labs build harnesses themselves when they can reach the buyer through the same flow with which they sell model access, like Claude Code and Cowork. They partner or buy when the buyer lies beyond that motion, for example with the joint venture “consulting” partnerships and the multiple different acquisitions.

And sometimes, they may even elect to refrain from participating. Therein lies an enormous opportunity for value creation and capture that many are missing.

The Moat

Will the frontier labs eat everything? I doubt it. The moat in AI is specialized, not general, intelligence.

The models are clearly the most important thing in AI and they make all of this incredible innovation possible. But model quality is separate and distinct from value capture, and models themselves are not necessarily where value accrues. The most likely candidate for a durable moat is the specialized intelligence built around the models (the “harness”) and how well it’s executed.

Some of that the labs are attempting to build themselves, some they’re partnering or buying their way into, and the rest they may well leave alone, because they can’t retain the experts, won’t carry the liability, or can’t afford to acquire the target.

That residual space, the part the labs aren’t entering, looks like where AI-native businesses have the best shot at finding durable ground. Even where the labs are present, there may be a “perfect storm” combination of things that make a particular instantiation of a harness extremely attractive nevertheless.

It’s incredibly early, and it’s normal we don’t quite know what ultimate form the winners will take.

What I don’t think they’ll look like is better SaaS. It’s tempting to frame the harness as the next generation of vertical SaaS, configured per customer and designed with agents in mind. But that framing is too gentle. The SaaS-era moat was that the workflow lived in the interface, the switching cost was muscle memory, training, and daily use. That moat is already gone. When the workflow moves into the harness and the agent does the work, the incumbent’s interface no longer matters. The system of record and the system of action collapse into one layer, and it isn’t the incumbent’s layer.

So the honest version is harder than “next-generation vertical SaaS.” The best harnesses don’t upgrade the incumbent. They delete the reason the incumbent was hard to replace. The logo may survive for a while, especially in verticals where regulation, data gravity, or integration sprawl slow things down, hollowing-out is uneven and in some industries it takes a decade. But the defensibility goes first, and it goes fast.

Which raises the obvious objection: if a model generation can dissolve a thirty-year incumbent’s moat that fast, what stops the next model generation from dissolving the harness’s moat just as fast? It’s the right question, and the answer is that not all moats decay the same way. The SaaS interface moat was static. It was a wall built once, and once the workflow moved off the interface the wall just stood there, defending nothing.

The harness moat, built well, is not static. Proprietary data routed natively against the model accumulates, the harness knows more about the vertical every quarter. The accountable-counterparty relationship deepens with every matter the harness handles and every audit it survives. The workflow specialization compounds as the harness absorbs more edge cases than a generalist ever sees. A better model improves all of this rather than erasing it, because the moat was never “the thing the model couldn’t do.” It was the context, the data, the liability, and the trust that the model runs against.

The harnesses that get sherlocked are the ones whose only moat really was a temporary capability gap.

The harnesses that last are the ones whose moat compounds while the model improves underneath it.

I do, however, believe the best versions of these companies will end up as something genuinely new: configured per customer, designed with agents in mind as the primary user, and compounding in utility and value over time in a way the software they replace never could.