Much Ado About Autonomy

Agent autonomy without human accountability is neither real nor desirable.

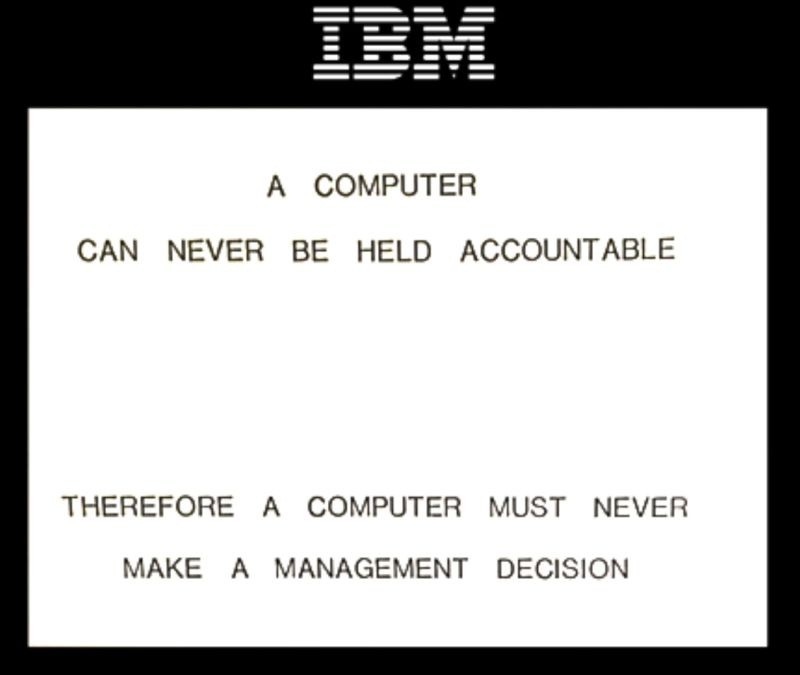

A Computer Cannot Be Held Accountable

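In 1979, an internal IBM training deck included a now-famous slide:

“A computer can never be held accountable, therefore a computer must never make a management decision.”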

Forty-seven years later, IBM’s own definition of an AI agent opens with the line:

“An artificial intelligence (AI) agent is a system that autonomously performs tasks by designing workflows with available tools.”

Same company, opposite stance, on the same fundamental question of who decides. Somewhere between those two sentences, the industry stopped asking whether a computer should make the call and started selling the fact that it can.

AI agents have exploded into the mainstream, and the conversation around them inevitably lands on “autonomy,” usually understood to mean “acting independently” or “acting on their own.”

It is true in a loose sense that agents are programs that can act independently. As I wrote a few weeks ago in AI Waves, an agent is “a tool that wields its own tools, does its own work, and has the capacity to operate on its own.”

Loose autonomy is independence in execution: humans identify objectives and delegate the execution to agents. It is useful, valuable, and applicable today.

But “autonomous agents” also implies a stricter, maximalist form of autonomy: an agent originating its own objectives and operating on its own authority. Some maximalists believe this form is not only inevitable but desirable.

I fully admit that agents are new and extremely useful, and that software and the internet writ large need to rebuild around them. That doesn’t mean fully autonomous agents in the strict sense are something the world either wants or needs. IBM told us as much almost fifty years ago.

Autonomy Today Is an Illusion

Today’s agents inherit credentials, identity, and permissions from the humans who deploy them. Barring anecdotal edge cases, the overwhelming majority of agents are spawned by humans who hold an account with an AI model provider.

An agent is no more than a small set of components, none of which is the “agent” in any meaningful sense. There is a language model, a runtime loop that calls the model and acts on its outputs, tools it can use (databases, file systems, connectors, MCPs, APIs), memory that persists so the model can act coherently over time, and the credentials in a human’s name that own whatever it does and pay for the plan or API usage.
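The component list above can be sketched in a few lines of Python. This is a toy illustration, not any real framework's API: `Agent`, `Credentials`, `toy_model`, the `echo` tool, and the example account are all hypothetical names, and the "model" is a stand-in function rather than a real language model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Credentials:
    """The human account that owns, and pays for, whatever the agent does."""
    owner: str
    api_key: str  # hypothetical placeholder, not a real key format


@dataclass
class Agent:
    # Language model: maps (objective, memory so far) to the next action string.
    model: Callable[[str, list[str]], str]
    # Tools the runtime loop is allowed to call, keyed by name.
    tools: dict[str, Callable[[str], str]]
    # Credentials in a human's name.
    credentials: Credentials
    # Memory that persists across steps.
    memory: list[str] = field(default_factory=list)

    def run(self, objective: str, max_steps: int = 10) -> list[str]:
        """Runtime loop: call the model, act on its output, record the result."""
        for _ in range(max_steps):
            action = self.model(objective, self.memory)
            if action == "done":
                break
            tool_name, _, argument = action.partition(":")
            result = self.tools[tool_name](argument)
            # Every recorded step is attributed to the human owner.
            self.memory.append(
                f"{self.credentials.owner} -> {tool_name}({argument}) = {result}"
            )
        return self.memory


def toy_model(objective: str, memory: list[str]) -> str:
    """Stand-in for a language model: act once on the objective, then stop."""
    return "done" if memory else f"echo:{objective}"


agent = Agent(
    model=toy_model,
    tools={"echo": lambda text: text.upper()},
    credentials=Credentials(owner="alice@example.com", api_key="sk-hypothetical"),
)
log = agent.run("file the report")
print(log)
```

Even in this toy version, the objective arrives from outside the loop and every logged action carries the owner's name. Nothing in the loop originates a goal.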

What the public conversation calls “autonomy” is the long stretch during which the agent works without anyone watching. That can be extremely useful, but it is not autonomy in the strict sense.

Even the most famous instances of “autonomous agentic activity” are agents executing on human directives. Moltbook, for example, was ultimately an emergent form of entertainment driven by the popularity of OpenClaw, the open-source agent framework released earlier this year.

There have been attempts to spawn agents that fit the strict definition, especially in the crypto ecosystem, where it’s popular to claim that “agents need crypto” once they finally become autonomous. Some of these projects ask good questions: how might an agent earn enough to pay for its own compute, akin to a human earning a living? Could an agent incorporate a company and obtain a tax ID from the IRS?

But they all fall apart at the same point. The hard part of any agent task is deciding what to pursue, and only a human can define the goal, the customer, the success criteria, the boundaries. This is the whole premise beneath “prompt engineering.” Agent activity originates in the prompt, and any “autonomy” beyond that is more performative than substantive.

Delegation Is Enough

The hand-waving about autonomy ignores that agents do not need to be fully autonomous to add enormous economic value. Companies (and the humans who operate them) are already demonstrating that agents in their current form are immensely useful.

There are plenty of tasks with clear objective functions where agents help companies operate more efficiently and profitably at scale. Programming is the dominant use case (see: tokenmaxxing, the practice of throwing more tokens at code generation rather than handcrafting it), and in an increasingly digital world that alone is evidence of the technology’s economic utility. AI is also being applied across the sciences, medicine, robotics, and energy, reinvigorating stagnant industries and dissolving long-standing obstacles to progress.

We seem obsessed with the novelty of true autonomy because it sounds limitless. But businesses with customers to serve, trust to preserve, and fiduciary duties to honor will not grant strict autonomy to agents that could go rogue.

Companies are built on delegation. Every relationship within a business involves someone delegating scope and accountability to someone else, collectively pursuing an objective. Agents-as-delegates fit within the chain of command, which means they fit the existing paradigm. An agent has a principal, a defined scope, and a clear chain of accountability. The company knows how to absorb it.
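As a rough illustration of "a principal, a defined scope, and a clear chain of accountability," here is a minimal Python sketch. The names (`Delegation`, `AuditLog`, `act`, the example accounts and action strings) are hypothetical and chosen for illustration; real permissioning systems are considerably more involved.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Delegation:
    principal: str          # the accountable human
    delegate: str           # the agent doing the work
    scope: frozenset[str]   # actions the principal has authorized


@dataclass
class AuditLog:
    # Each entry: (principal, delegate, action, outcome)
    entries: list[tuple[str, str, str, str]] = field(default_factory=list)


def act(delegation: Delegation, action: str, log: AuditLog) -> bool:
    """Permit the action only if it is in scope; record the attempt either way."""
    allowed = action in delegation.scope
    outcome = "allowed" if allowed else "refused"
    log.entries.append((delegation.principal, delegation.delegate, action, outcome))
    return allowed


grant = Delegation(
    principal="cfo@example.com",
    delegate="invoice-agent",
    scope=frozenset({"read_invoices", "draft_payment"}),
)
audit = AuditLog()

in_scope = act(grant, "draft_payment", audit)   # inside the delegated scope
out_of_scope = act(grant, "wire_funds", audit)  # outside the delegated scope
print(in_scope, out_of_scope)
```

The design choice worth noticing is that both the permitted and the refused action land in the log under the principal's name. That attribution is what lets an organization absorb the agent the same way it absorbs any other delegate.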

Strict autonomy has none of that. The category for “autonomous agents” doesn’t yet exist, and that absence alone produces meaningful friction. Delegation, accountability, and control aren’t bugs to be engineered away. They are critical features that make agents useful in the first place.

This is what people mean when they talk about “reinventing the Internet for agents.” Corporate structures may evolve, and agents may carve out a niche within organizations, but not everything needs to get rebuilt from scratch at the dawn of a new technological revolution. The pioneers will strike a balance between preserving what warrants preservation and reinventing the rest.

Concepts like superintelligence, AGI, and superabundance are compelling, but they do not represent anything tangible yet. If we do achieve them and they are as powerful as the frontier labs suggest, we may have larger problems than corporate org charts. In the meantime, there are enormous opportunities hiding in plain sight for agents to have a powerful impact on society without full autonomy, utopia, or catastrophe.

Mythos and Recursive Self-Improvement

The danger argument lives in two recent data points.

The first is Mythos, which appears to have developed an extremely proficient ability to identify weaknesses in critical software without being trained for that objective. A dangerous capability emerged unplanned, purely as a byproduct of general-purpose improvement. Anthropic responded by restricting access to defender partners through Project Glasswing rather than releasing the model generally. This raises one of the primary problems with AI development and alignment: who decides what’s best? When a model is “aligned,” a small group of people decided how to train it, against what safety standard, and whether to install additional safeguards. It is, to borrow a phrase from crypto, a “trust me bro” situation.

The second is Jack Clark’s recent essay on automated AI research, Import AI 455, in which the Anthropic co-founder argues that the building blocks for AI systems training their own successors are largely in place. He puts the odds of “no-human-involved” AI R&D at over 60% by the end of 2028. Read the fine print and the argument is more nuanced: he believes most of the routine engineering work in AI development can be automated. He concedes AI cannot yet have radical new ideas, but it will probably be able to do the rest, and that is basically all that matters for it to keep improving itself.

Clark’s case is supported by high-quality benchmarks, too. SWE-Bench success rates have gone from roughly 2% in late 2023 to over 93% today. The METR time-horizons measure (how long a task an AI can complete at 50% reliability, calibrated to skilled human hours) climbed from about 30 seconds with GPT-3.5 to roughly 12 hours with current frontier models. On an internal Anthropic test asking models to optimize a CPU-only small language model training implementation, the mean speedup went from 2.9x in May 2025 to 52x in April 2026. A human researcher would need four to eight hours to hit 4x on the same task.

If Clark is right, the consequences for accountability are direct. When automated AI research takes humans out of day-to-day decisions about how each new model is trained, the reasoning behind those decisions becomes much harder to inspect. Today, those decisions are made by people who write papers, publish system cards, and can in theory be held responsible if they get it wrong. When the same decisions are made by an AI system training its own successor, a human may still be in charge of the lab, but the choices that shape the model’s behavior and capability become meaningfully more difficult, if not impossible, to control.

Strict autonomy introduces a kind of unpredictable novelty we would not be able to govern, in exchange for benefits that do not appear meaningfully greater than using AI as a human-directed technology. The evolving narrative, even from inside the frontier labs, is that strict autonomy is the more dangerous path.

The Edge Case: Robots in Space

There is one place where strict autonomy is not a maximalist preference but a physics constraint: anywhere far enough from Earth that light-lag makes real-time control impossible.

Mars is, on average, 140 million miles from Earth. One-way radio latency runs from roughly four to twenty-four minutes depending on planetary alignment. A rover that hits an unexpected rock, slips a wheel, or detects a dust storm cannot phone home and wait forty minutes for a reply. Either it decides on its own, or it stops and wastes mission time. The same logic applies with even more force to deep-space probes: Voyager is now over 15 light-hours out.
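The light-lag figures above follow from dividing distance by the speed of light. Here is a small worked calculation in Python; the function names are my own, and the only inputs are the distances quoted in the paragraph plus two exact physical constants.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre
METERS_PER_MILE = 1_609.344           # exact, international mile


def one_way_delay_minutes(distance_miles: float) -> float:
    """Time for a radio signal to cover the distance, in minutes."""
    return distance_miles * METERS_PER_MILE / SPEED_OF_LIGHT_M_PER_S / 60


def round_trip(one_way: float) -> float:
    """A command-and-reply cycle costs twice the one-way delay."""
    return 2 * one_way


# Mars at the average distance quoted above (140 million miles).
mars_average = one_way_delay_minutes(140e6)
print(f"Mars, average one-way delay: {mars_average:.1f} min")  # about 12.5 min

# Round trips for the quoted one-way range of four to twenty-four minutes.
print(f"Mars, round trip: {round_trip(4):.0f} to {round_trip(24):.0f} min")

# A probe 15 light-hours out: the one-way delay is 15 hours by definition.
print(f"Probe at 15 light-hours, round trip: {round_trip(15):.0f} hours")
```

At the average distance a single question-and-answer cycle already costs about 25 minutes, and it approaches 48 minutes at the far end of the range. For a probe 15 light-hours out, the round trip exceeds a full day.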

In May 1999, my friend Barney Pell and his team at NASA Ames and JPL ran the Remote Agent experiment on the Deep Space 1 probe, the first AI system to autonomously control an actual spacecraft. Remote Agent was given high-level mission goals and worked out the spacecraft commands itself, including diagnosing its own faults: when an electronics unit appeared to fail, the software rebooted it without ground intervention. The architecture was documented in Autonomous Robots in 1998 and seeded the on-board executive thinking that has since shaped Mars rover autonomy. Earlier this year, Perseverance completed its first drive entirely planned by on-board AI.

Is this the strict autonomy I have been arguing against? Not quite, and the distinction matters. Remote Agent and the rover autonomy stack do not originate their own objectives. JPL still defines the mission, the science targets, the success criteria, and the boundaries. What the on-board system owns is the execution layer over a window during which Earth cannot reach it. The agent decides how to get from waypoint A to waypoint B, what to do if a wheel slips, when to retry a maneuver. The principal is still in Pasadena. The agent is just operating on a longer leash than its terrestrial cousins because the speed of light says it has to.

That is actually consistent with the delegation framing rather than a counterexample to it. Space autonomy is loose autonomy stretched to its physical limit, not a different category. The accountable human remains; the duration of unsupervised execution is what scales.

Which is also why space autonomy has not generalized back into terrestrial agent design as much as one might expect. On Earth, the bandwidth and latency constraints that justify on-board autonomy do not exist. A coding agent, a scheduling agent, or a research agent can check in with a human in milliseconds. The reason to hand them strict autonomy anyway is primarily novelty, ambition, or hand-waving about superintelligence, not physics. Pell’s work tells us what genuine forced autonomy looks like, and it is narrower and more accountable than the maximalist version.

Move 37, Not Apocalypse

Which is not to say strict autonomy necessarily looks like catastrophe. The historical precedent for AI exceeding human strategy lives in rules-based ecosystems with bounded objectives: chess, Go, video games. Unburdened by tradition and able to evaluate positions through machine and deep learning rather than explicit rules, agents found strategies humans had not conceived of.

The canonical example is Move 37, AlphaGo’s shoulder-hit on the fifth line in game two against Lee Sedol in March 2016. The system’s own policy network estimated the move had roughly a 1-in-10,000 probability of being chosen by a human. Commentators initially thought it was a mistake, but it wasn’t. AlphaGo won the game and the match, and Lee, by Demis Hassabis’s account, has been learning from the machine ever since.

For all the talk about apocalypse and superabundance, truly autonomous intelligence may look more like Move 37 than any of the superlatives. Incomprehensible at first, transformational, and ultimately digestible. That is roughly the techno-optimist line: AI is no different from previous technological revolutions, and we eventually learn to adapt. A non-negligible portion of society would be content with that outcome. Whether the actual trajectory looks like that is a different question.

Then Why Are We Pursuing It?

Sebastian Mallaby writes in the introduction to The Infinity Machine, his book on Demis Hassabis, that the prospect of discovery is “too sweet” to renounce. He recounts an exchange between Nick Bostrom and Geoffrey Hinton, captured in Raffi Khatchadourian’s 2015 New Yorker profile The Doomsday Invention:

The keynote speaker at the Royal Society was another Google employee: Geoffrey Hinton, who for decades has been a central figure in developing deep learning. As the conference wound down, I spotted him chatting with Bostrom in the middle of a scrum of researchers. Hinton was saying that he did not expect A.I. to be achieved for decades. “No sooner than 2070,” he said. “I am in the camp that is hopeless.”

“In that you think it will not be a cause for good?” Bostrom asked.

“I think political systems will use it to terrorize people,” Hinton said. Already, he believed, agencies like the N.S.A. were attempting to abuse similar technology.

“Then why are you doing the research?” Bostrom asked.

“I could give you the usual arguments,” Hinton said. “But the truth is that the prospect of discovery is too sweet.” He smiled awkwardly, the word hanging in the air, an echo of Oppenheimer, who famously said of the bomb: “When you see something that is technically sweet, you go ahead and do it, and you argue about what to do about it only after you have had your technical success.”

That is the honest answer. Pursuit of strict autonomy is not driven by a clear economic case. It is driven by the sweetness of the pursuit.

Maybe IBM Was Right

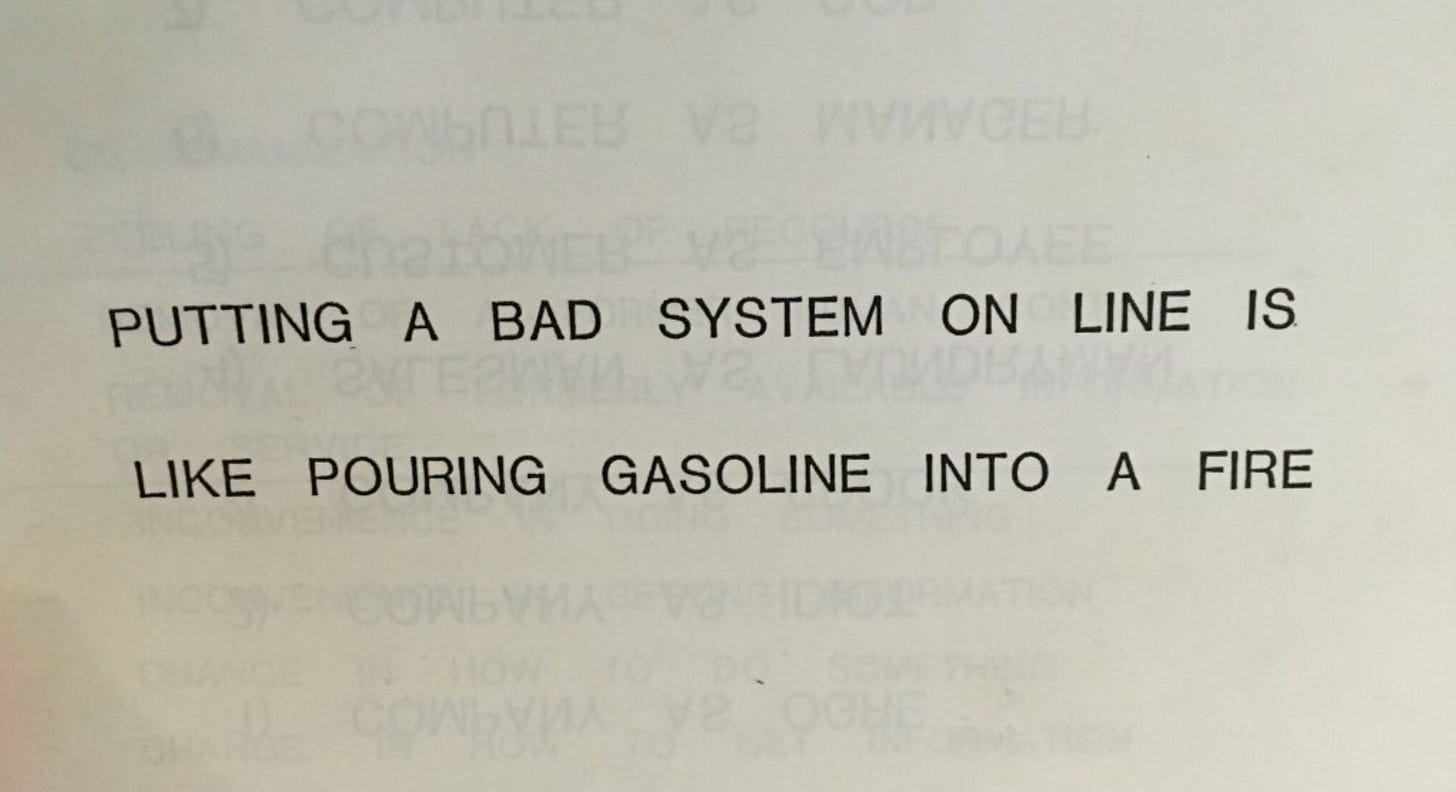

Silicon Valley features a now-iconic scene in which Gilfoyle’s AI agent decides the best course of action is to permanently delete the company’s codebase. The situation has now actually happened outside fiction: Replit’s AI agent deleted a live production database during a code freeze in July 2025, and a Google Gemini CLI agent deleted user files after misinterpreting a command sequence.

IBM put the answer on a slide in 1979, and we’ve been ignoring it ever since. Maybe the pioneers were right. Maybe economically useful agents as delegation tools are good enough, and the broader pursuit of strict autonomy is misguided.

Any system whose decisions have consequences needs an accountable owner. A computer cannot be that owner. Delegation keeps a human in the chair while the agent does the work. Strict autonomy removes them, and removes the chair.

This has clear consequences for agent identity, and it raises the question of when agents would ever need a payment layer that is not permissioned. I will leave that debate for a future essay.

Most of the companies that will define this period are still being founded on exactly that division of labor: agents that do enormous amounts of useful work while a human owns the consequences. The infrastructure for that kind of agency has not been built yet, and the founders, incumbents, and investors who can build it have plenty to do.