Root Access: OpenClaw, Red Hat, and Palantir

The lobsters are coming for you

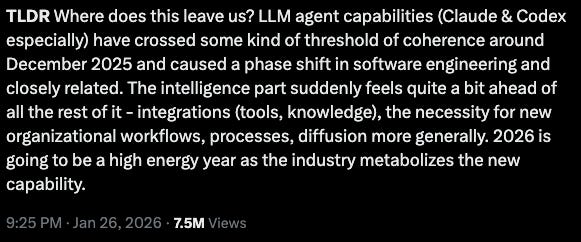

2025 was supposed to be the “year of the agents.” We only missed by 11 months.

As Karpathy wrote, agents actually crossed the chasm in ~November/December of 2025.

What’s more, as Ethan Mollick notes, the AI labs have generally been right. It is extremely tempting to judge the labs and their predictions almost instantly (see Dario’s prediction that AI will render knowledge work obsolete in the near future…). It doesn’t help that enormous amounts of money depend on these judgments and their accuracy, either… but maybe we should *try* thinking a bit longer-term.

OpenClaw

In any case, by all accounts, 2026 will, in fact, be the year of the agents, and Exhibit A is OpenClaw.

Much has already been written about OpenClaw. I will not be contributing another explainer. For a comprehensive overview, please see here.

If you aren’t already aware, OpenClaw is an open-source, autonomous agent created by Peter Steinberger that runs locally on your machine, connects to your messaging platforms – WhatsApp, Slack, Telegram, Discord, iMessage, Signal, Teams, etc. – and operates as a persistent daemon: always on, always listening, always acting.

Apparently, Steinberger was personally losing $10,000 to $20,000 per month to keep it running. In any case, it’s no exaggeration to say that it has ROCKED the AI world, in every possible way. Reactions range from measured enthusiasm that agents have finally demonstrated utility to the typical breathless excitement over technology deemed “life changing.”

Although creating it is an immense achievement worth recognizing, the core technology isn’t actually revolutionary. It’s a well-designed wrapper connecting an LLM to local tools and services. What made it take off is that it put familiar pieces together in a way that felt, for the first time, like having a genuine digital assistant rather than a chatbot you open and close. It’s what everyone was hoping for in 2025.

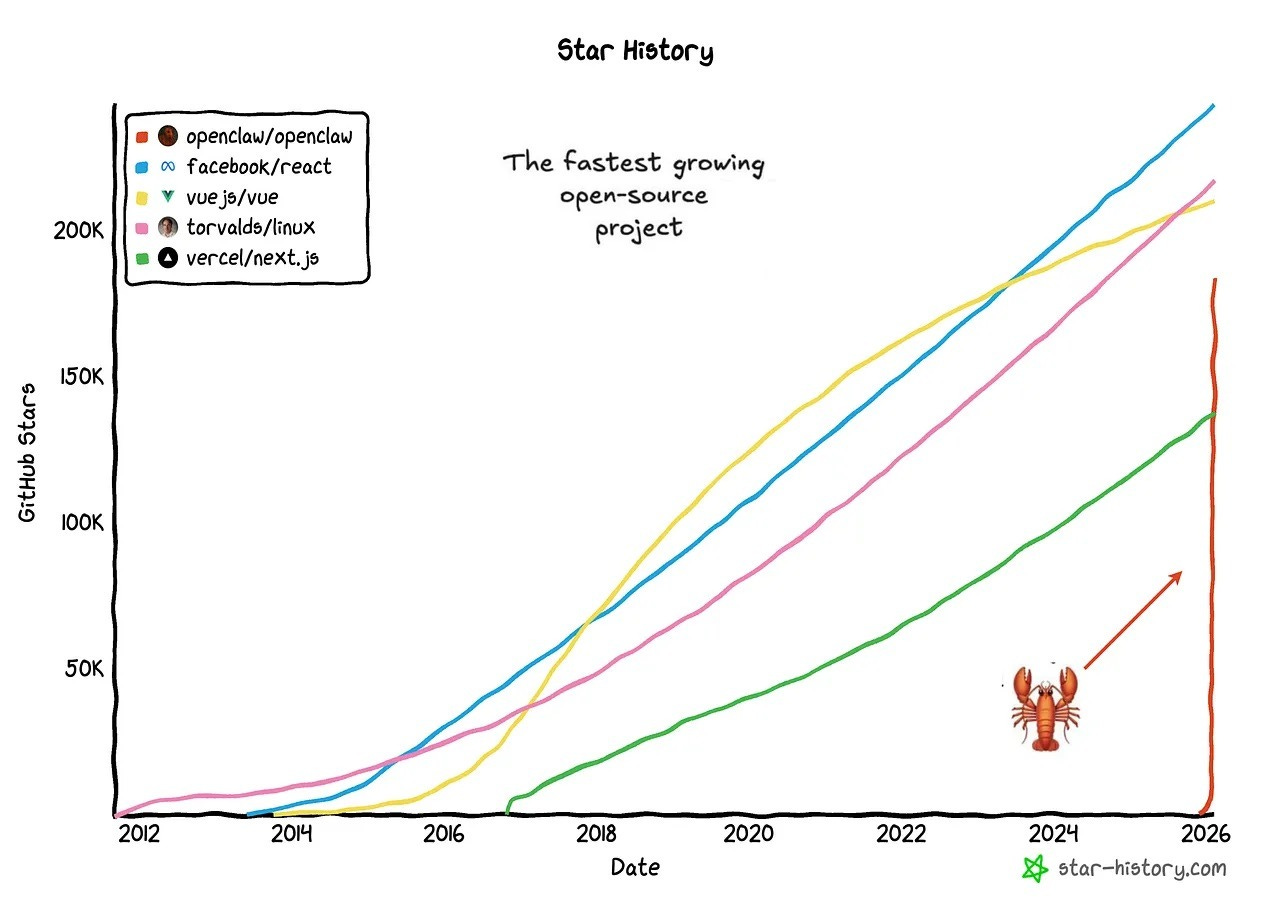

As such, traction and usage exploded. To speak of product-market fit is a dramatic understatement: as with nearly every popular AI-native invention, the adoption curve doesn’t resemble anything else we’ve ever seen. OpenClaw crossed 100,000 GitHub stars faster than React, TensorFlow, or Kubernetes. The adoption curve is practically vertical.

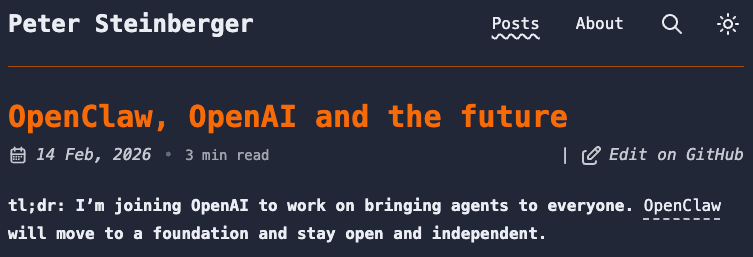

There is perhaps no better demonstration of its importance in the AI landscape than how the first chapter of this fascinating development ended: Steinberger joined OpenAI. Yup! Sam hired him within three months.

The Problem

As you can imagine, with great power comes great responsibility, and most people don’t care about the responsibility part.

Part of the reason OpenClaw is so effective is that it operates with the full privileges of its host user. That means shell access, file system read/write, OAuth credentials for connected services, and any digital access keys a user is willing to give it. For that reason, users began buying dedicated Mac Mini computers to get a “clean” environment on which to run their instance of this software.

At SXSW last year, Meredith Whittaker, President of the Signal Foundation, had already articulated the fundamental tension in giving agents root access to computers.

Nevertheless, tens of thousands of people gave an autonomous AI agent root access to their computers.

Source: https://www.wired.com/story/malevolent-ai-agent-openclaw-clawdbot/

VentureBeat put it best: “OpenClaw proves agentic AI works. It also proves your security model doesn’t.” Here is a quick overview of the security hazards involved 😂:

Security researchers found 40,000 exposed OpenClaw instances on the public internet.

BitSight deployed honeypots and saw exploitation attempts within minutes.

Because SOUL.md – the file defining the agent’s identity – is writable, prompt injection attacks can achieve persistence: an attacker tricks the agent into rewriting its own instructions, creating a backdoor that survives restarts.

~7% of the skills available on Clawdhub were found to be malicious – installing stealer malware that harvests browser passwords, crypto wallets, and session cookies.

And much, much more…
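The SOUL.md persistence problem in the list above lends itself to a simple defensive check. Here is a minimal sketch, assuming a hypothetical file location and a baseline hash recorded while the file was known to be good. None of these function names come from OpenClaw itself. Note that an agent running with the user’s own privileges can flip permissions back, so the hash comparison only helps if something outside the agent runs it.

```python
import hashlib
import stat
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def make_read_only(path: Path) -> None:
    """Clear every write bit, so the file must be re-permissioned before it can be rewritten."""
    mode = path.stat().st_mode
    path.chmod(mode & ~(stat.S_IWUSR | stat.S_IWGRP | stat.S_IWOTH))


def is_tampered(path: Path, baseline: str) -> bool:
    """True if the file's current digest differs from the recorded baseline."""
    return sha256_of(path) != baseline


# Hypothetical usage (the path is an assumption, not a documented location):
# soul = Path.home() / "agent" / "SOUL.md"
# baseline = sha256_of(soul)   # record once, store somewhere the agent cannot write
# make_read_only(soul)
# ...later, from a separate watchdog process:
# if is_tampered(soul, baseline): alert a human and stop the agent
```

This does not stop prompt injection. It only turns a silent rewrite of the agent’s instructions into something a human can notice.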

CrowdStrike released an enterprise-wide security framework for OpenClaw. Cisco’s security team called it “groundbreaking” from a capability perspective and “an absolute nightmare” from a security perspective.

The Opportunity

All of which is to say there’s an opportunity here. For what it’s worth, I’ve seen this before. At Sun Microsystems, proprietary infrastructure migrated to commodity hardware and Linux. When that happened, companies like Red Hat built extremely valuable businesses implementing frontier software for enterprises.

In fact, enterprises will pay enormous sums for someone to make free software reliable, secure, and compliant.

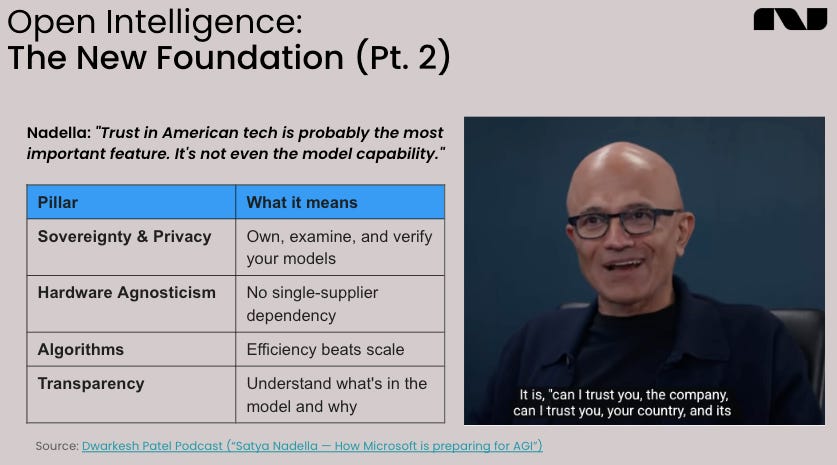

Chamath mentioned as much on the All-In podcast a week ago, saying that “on-prem is back”:

“Once you use these tools, it is very difficult for a company to be able to control how their data is used subsequently thereafter… If you’re using a set of agents to act on all that information, all those agent traces are going back to these model builders... When they realize that that is a problem, the enterprise will have to decide, do I just give up and keep running all of this stuff in the cloud in a shared experience or do I bear the incremental cost of running this stuff in a more coordinated manner that I control on prem?”

Supporting his claim, Chamath pointed to the ruling by Judge Jed Rakoff of the Southern District of New York in United States v. Heppner, which held that “documents generated through a consumer version of Anthropic’s Claude AI were not protected by the attorney-client privilege or the work-product doctrine under the circumstances presented.”

There is also data suggesting that 86% of CIOs now plan to move some workloads back on-premise, and Lenovo’s 2026 TCO analysis found on-premise AI inference achieves breakeven in under four months versus cloud.
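For intuition on what a breakeven of “under four months” means, here is a back-of-the-envelope sketch. The formula is the generic one (upfront cost divided by monthly savings), and every number below is invented for illustration. None of it is taken from Lenovo’s analysis.

```python
def breakeven_months(upfront_cost: float, cloud_monthly: float, onprem_monthly: float) -> float:
    """Months until cumulative on-prem cost drops below cumulative cloud cost."""
    monthly_savings = cloud_monthly - onprem_monthly
    if monthly_savings <= 0:
        raise ValueError("on-prem never breaks even unless it is cheaper per month than cloud")
    return upfront_cost / monthly_savings


# Illustrative inputs only: $300k of hardware, $100k/month cloud bill,
# $20k/month to power and operate the on-prem equivalent.
print(breakeven_months(300_000, 100_000, 20_000))  # 3.75
```

The takeaway is that the result is driven almost entirely by utilization: the busier the workload, the larger the monthly cloud bill being replaced, and the faster the hardware pays for itself.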

So is there an AI-native version of Red Hat? (Reach out if you’re building this!) We think it may involve something like Palantir’s “Forward Deployed Engineer” model. For a brilliant breakdown of the FDE, see Marty Cagan’s piece here. There are a few highlights:

What makes Palantir’s model work: FDEs build prototypes on top of Palantir’s platform. A platform product team then generalizes what FDEs learn across clients into reusable capabilities. This prevents bespoke sprawl while keeping the deep customer intimacy.

The broader principle: send your best engineers to sit with multiple customers, see the problem space firsthand, and discover solutions that serve real needs across them. This is customer discovery at its most effective.

It goes without saying that there’s lots of data demonstrating that this model works, too (see $PLTR’s market cap, their margins, and their growth). Palantir’s FDEs embed directly with clients to configure and deploy their own software against real operational problems, but it’s no stretch to imagine some cracked team of devs organizing into a modern, elite AI consultancy that configures bespoke on-prem instances of the latest AI. In fact, Cagan explains how and why traditional consulting models (specifically Accenture) break down when faced with AI.

As such, the AI-native, Red-Hat-meets-Palantir model could become especially valuable because understanding the customer environment in the context of AI is more complex and critical than ever.

To be clear, that model is as follows:

Red Hat for the software layer

Open-source or commodity agent frameworks as the core, with enterprise subscriptions for security, compliance, and support

Palantir (the FDE framework) for deployment

Embedded engineers who configure agents against specific data, workflows, and regulatory constraints

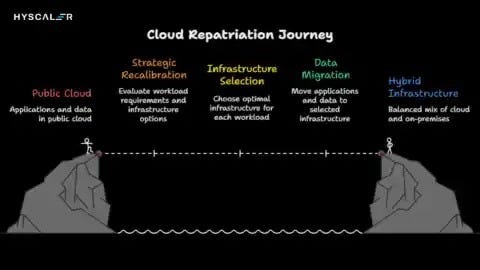

Cloud isn’t dying, either. What’s happening is selective repatriation: enterprises are moving their most sensitive, high-utilization AI workloads on-premise while keeping burst and experimental workloads in the cloud. But for many organizations (including nations!), control is all that matters. As autonomous agents handle more and more proprietary data, the trend toward infrastructure that organizations control themselves is clear.

Nazaré’s Position

The industry’s consensus remains scale – more compute, larger models, bigger data centers, etc. But other problems exist, and around them the investment space is less crowded and returns are therefore (much) more attractive. The “who, what, where, why, and how” for AI is critically important, especially within real organizations.

OpenClaw means that 2026 will be the year of agents. We know that Red Hat’s model for enterprise open source and Palantir’s model for embedded deployment are both feasible and lucrative.

We’ve believed for some time now that, among many other opportunities in AI, the company that configures, deploys, secures, and maintains autonomous AI agents on sovereign infrastructure will build one of the most important businesses of the next cycle.

If you’re working on that, we’d love to hear from you.