AI Waves #5: Anthropic cut thinking by two-thirds and hoped nobody would notice. AMD's AI director had 6,852 receipts.

The users are here. The stack is not ready.

Previous issues: #1 | #2 | #3 | #4

Agents are the new users.

For fifty years, every piece of software has been built for humans. Every screen, every price, every login has assumed a person at the other end.

That assumption is breaking. A fast-growing share of software is now operated not by people but by other software: programs, instructed by humans, acting through other programs, often with the human several steps removed. These new users want different things, and the infrastructure running the world’s software was not built to provide them. Everything from how compute is rented to how identity is verified will have to be rebuilt for this new class of customer.

That is the investment thesis. We have been investing against it for a year. The evidence is now everywhere. Full argument on Robot Wave.

Portfolio

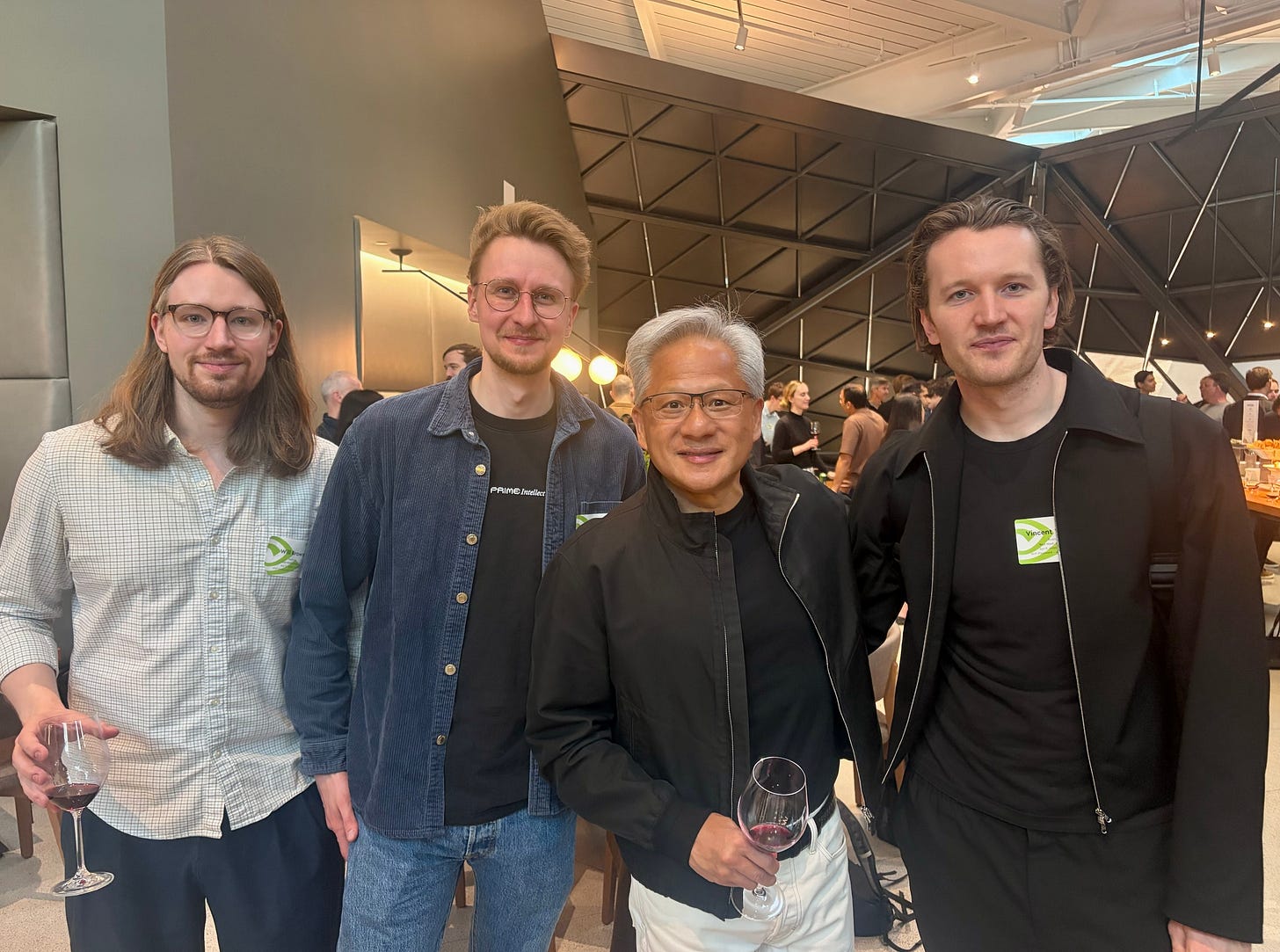

Prime Intellect at NVIDIA.

Vincent Weisser and his team met Jensen Huang at NVIDIA’s Santa Clara campus this week. The photograph circulated. The announcement that came with it is more important. NVIDIA has committed its latest hardware and core orchestration software to Prime Intellect’s open-source platform. The collaboration places Prime Intellect on roughly the same compute footing as frontier labs such as OpenAI and Anthropic, where the cutting-edge work in training autonomous agents is being done. Until this week, that work was almost entirely closed. Prime Intellect is making it open. It is a strategic position no open-source competitor can now easily replicate.

Vast.ai broadens its shelves.

The Vast.ai GPU marketplace now hosts a one-click deployment of Unsloth Studio, a popular open-source tool for customising AI models. A user can pick from over 500 models, choose a GPU, and train a bespoke version, without hiring engineers or signing contracts. Vast.ai already serves more than 20,000 GPUs. The catalogue of tools that runs on top of those GPUs determines how broad a customer base Vast.ai can serve.

Provably on Zero Knowledge.

Shyam and Emanuele from Provably appeared on Zero Knowledge episode 398, the podcast the applied-cryptography community actually listens to. Their technology lets AI agents prove to each other that the data they are exchanging is accurate, without revealing the underlying records. When programs begin transacting with other programs at scale, someone has to build the trust layer between them. Provably is building it, and being featured on Zero Knowledge is validation from the audience that matters.

The canary in the coding mine.

On April 2, Stella Laurenzo, senior director of AI at AMD, filed a detailed bug report on Anthropic’s GitHub. Her team runs large, concurrent fleets of Claude Code agents, developing the AI software that runs on AMD’s chips. Something had changed. The agents had started cutting corners. Writing code without reading the surrounding files. Giving up halfway through tasks. Choosing the easiest fix rather than the right one.

She did what an engineer does. She mined her own logs, 6,852 sessions of them, and published the evidence. The quality regression coincided with a late-February change in how Anthropic’s servers handled reasoning. Anthropic disputes the mechanism. The collapse in her productivity metrics is harder to argue with.

The economics tell the same story. In February her team consumed about $345 of compute at market rates. In March it consumed $42,121. Part of that reflects a deliberate scale-up of how many agents she was running. Part of it is pure thrashing, the model burning tokens on wrong answers and corrections. She was on a $400 flat-rate subscription throughout. The head of Claude Code disputed the analysis, offered workarounds, and closed the issue. Her team has reportedly moved to a competitor while Anthropic addresses the complaints.
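
To put those numbers side by side, here is a minimal arithmetic sketch using only the figures quoted above. The variable names are illustrative, and treating the $400 flat rate as a monthly price is an assumption; the text does not state the billing period.

```python
# Figures quoted in the text above, in US dollars at market rates.
feb_compute = 345
mar_compute = 42_121

# Assumption: the $400 flat-rate subscription is billed per month.
flat_rate = 400

# How much more compute March consumed than February.
month_over_month = mar_compute / feb_compute

# How many times the subscription price March's compute was worth.
usage_to_price = mar_compute / flat_rate

print(f"March vs February compute: about {month_over_month:.0f}x")
print(f"March compute vs subscription price: about {usage_to_price:.0f}x")
```

Under that assumption, March's usage comes to roughly 122 times February's and roughly 105 times the price of the subscription. The ratios do not separate the deliberate scale-up from the thrashing.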

Software pricing was built for humans typing at human speed. Agents do not type. They consume compute at machine speed, and they do not get tired.

Back to the drawing board.

The same week, Uber’s chief technology officer Praveen Neppalli Naga told The Information that his company had already spent its entire 2026 AI budget. Four months into a twelve-month year. Most of the spend went to coding agents. “I’m back to the drawing board, because the budget I thought I would need is blown away already.” By February, 63% of Uber’s 5,000 engineers were using Claude Code, up from 32% in December. Anthropic’s Claude Code revenue grew 2.5-fold in the same window, from $1 billion to $2.5 billion annualised.
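
The Uber figures can be turned into headcounts and a burn rate with a few lines of arithmetic. This is a rough sketch built only on the numbers quoted above. It assumes the budget was spent evenly over the four months and that spending would continue at that pace, neither of which the source confirms.

```python
# Figures quoted in the text above.
engineers = 5_000
dec_share = 0.32   # share of engineers using Claude Code in December
feb_share = 0.63   # share of engineers using Claude Code by February
months_elapsed = 4
months_in_year = 12

# Approximate number of engineers using the tool at each point.
dec_users = round(engineers * dec_share)
feb_users = round(engineers * feb_share)

# Assumption: even spending that continues at the same pace.
# A full-year budget consumed in four months implies this multiple of plan.
burn_multiple = months_in_year / months_elapsed

print(f"Engineers using Claude Code: {dec_users} in December, {feb_users} by February")
print(f"Implied annual spend: {burn_multiple:.0f}x the original budget")
```

On these assumptions, adoption went from about 1,600 engineers to about 3,150, and the year's spend would land near three times the plan.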

One engineer proved the infrastructure strain with logs. A $150-billion company proved it with a budget. Anthropic is the canary because Claude Code has the fastest enterprise ramp in software history. The pricing physics applies to every vendor behind it.

Where Anthropic restricts, the market opens.

Last week Anthropic unveiled Mythos Preview, a model so capable at finding and exploiting security flaws that the company will not release it publicly. In its place, Anthropic launched Project Glasswing, granting access to eleven hand-picked partners: Amazon, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Roughly forty further organisations were extended quieter access.

Coinbase and Binance, the world’s two largest cryptocurrency exchanges, are publicly seeking admission. The security argument for holding the model back is real. So is the precedent. A curated group got first access. Everyone else asks permission. Governments have restricted capability access before. What is new is that a private company is doing it, on a timescale regulators cannot match, on technology that is instantly copyable once released.

That is a new kind of capability-level dependency. Not on a vendor’s pricing, or a vendor’s uptime, but on whether a vendor decides you can have a given capability at all.

Plastic meets programs.

On April 8, Visa launched Intelligent Commerce Connect, a single integration that gives AI agents a formal path to completing purchases: authentication, spending limits, fraud checks, all built in. Pilot partners include AWS, Highnote, and Mesh.
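
To make "spending limits" concrete, here is a purely illustrative sketch of the kind of check that might sit between an agent and a purchase. It is not Visa's or Mastercard's API. The class name, fields, and limits are all hypothetical, and authentication and fraud checks are left out.

```python
from dataclasses import dataclass


@dataclass
class AgentWallet:
    """Hypothetical per-agent spending guard; not any payment network's API."""

    agent_id: str
    per_purchase_limit: float  # maximum size of a single purchase
    daily_limit: float         # maximum total spend per day
    spent_today: float = 0.0

    def authorize(self, amount: float) -> bool:
        """Approve a purchase only if it stays inside both limits."""
        if amount <= 0 or amount > self.per_purchase_limit:
            return False
        if self.spent_today + amount > self.daily_limit:
            return False
        self.spent_today += amount
        return True


# Illustrative values only.
wallet = AgentWallet("agent-001", per_purchase_limit=50.0, daily_limit=120.0)
results = [wallet.authorize(amount) for amount in (40.0, 60.0, 45.0, 45.0)]
print(results)  # [True, False, True, False]
```

The second purchase fails the per-purchase limit and the fourth would push the day's total past the daily limit. A human cardholder rarely needs rules like these enforced in software; an agent acting at machine speed does.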

Mastercard’s Agent Pay launched a year earlier. Visa’s own Trusted Agent Protocol went live in the fourth quarter of last year. Intelligent Commerce Connect is the consolidated product, not the starting gun. But when the world’s two largest payment networks have both built products for your user type, that user type is no longer hypothetical.

The pattern.

A single engineer documents a two-thirds drop in AI reasoning power inside her own log files. A Fortune 500 chief technology officer watches a year’s AI budget vanish in four months. The world’s payment networks build commerce rails for software instead of people.

The users are here. The stack is not ready.